MIP as Opportunity for Probabilistic Thinking

Music, whether represented in symbolic or acoustic form, can be regarded as a random variable drawn from a stochastic process. A composer happens to make a musical score according to a musical theory, and a performer happens to make a music signal from a musical score. These stochastic “generation” processes inherently yield infinitely many possible hypotheses in the uncertain “inference” processes that aim to induce a musical theory from a musical score or to transcribe a musical score from a music signal. Composition and theorization, like performance and transcription, are two sides of the same coin, and all of these processes are mutually dependent through the musical score, the symbolic representation of music.

Using music as a good example, I want to emphasize the importance of associating the symbolic and acoustic representations of music and to introduce probabilistic thinking, a universal approach to the computational modeling of human intelligence. Taking music transcription tasks as examples, I show how to deal with music “stochastically” and “statistically” by formulating a latent variable model and deriving a posterior inference algorithm. I also show how to enrich this classical, principled framework with deep learning techniques. The key advantage of this approach is that it enables unsupervised or semi-supervised learning, which would play an essential role in overcoming the robustness, generalizability, and scalability limitations of recent Music Information Processing (MIP) methods based on supervised learning of deep neural networks (DNNs). The fact that such deep Bayesian learning (not Bayesian deep learning) can be applied to various research fields (e.g., probabilistic robotics) should encourage students, researchers, and engineers to learn “probabilistic” MIP.
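As a rough illustration of the latent variable formulation mentioned above, the transcription problem can be sketched as follows. The symbols are assumptions introduced here for illustration and are not taken from the talk: $X$ denotes the observed music signal, $Z$ the latent musical score, and $\theta$ the parameters standing in for a musical theory.

$$
\begin{aligned}
p(X, Z \mid \theta) &= p(X \mid Z)\, p(Z \mid \theta) && \text{(generation: theory} \to \text{score} \to \text{signal)} \\
p(Z \mid X, \theta) &= \frac{p(X \mid Z)\, p(Z \mid \theta)}{\sum_{Z'} p(X \mid Z')\, p(Z' \mid \theta)} && \text{(inference: signal} \to \text{score)}
\end{aligned}
$$

Under this sketch, transcription corresponds to computing or approximating the posterior over $Z$, and learning without annotated scores corresponds to fitting $\theta$ by maximizing the marginal likelihood $p(X \mid \theta) = \sum_{Z} p(X \mid Z)\, p(Z \mid \theta)$. The sum over candidate scores is generally intractable, which is why an approximate posterior inference algorithm is needed in practice.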

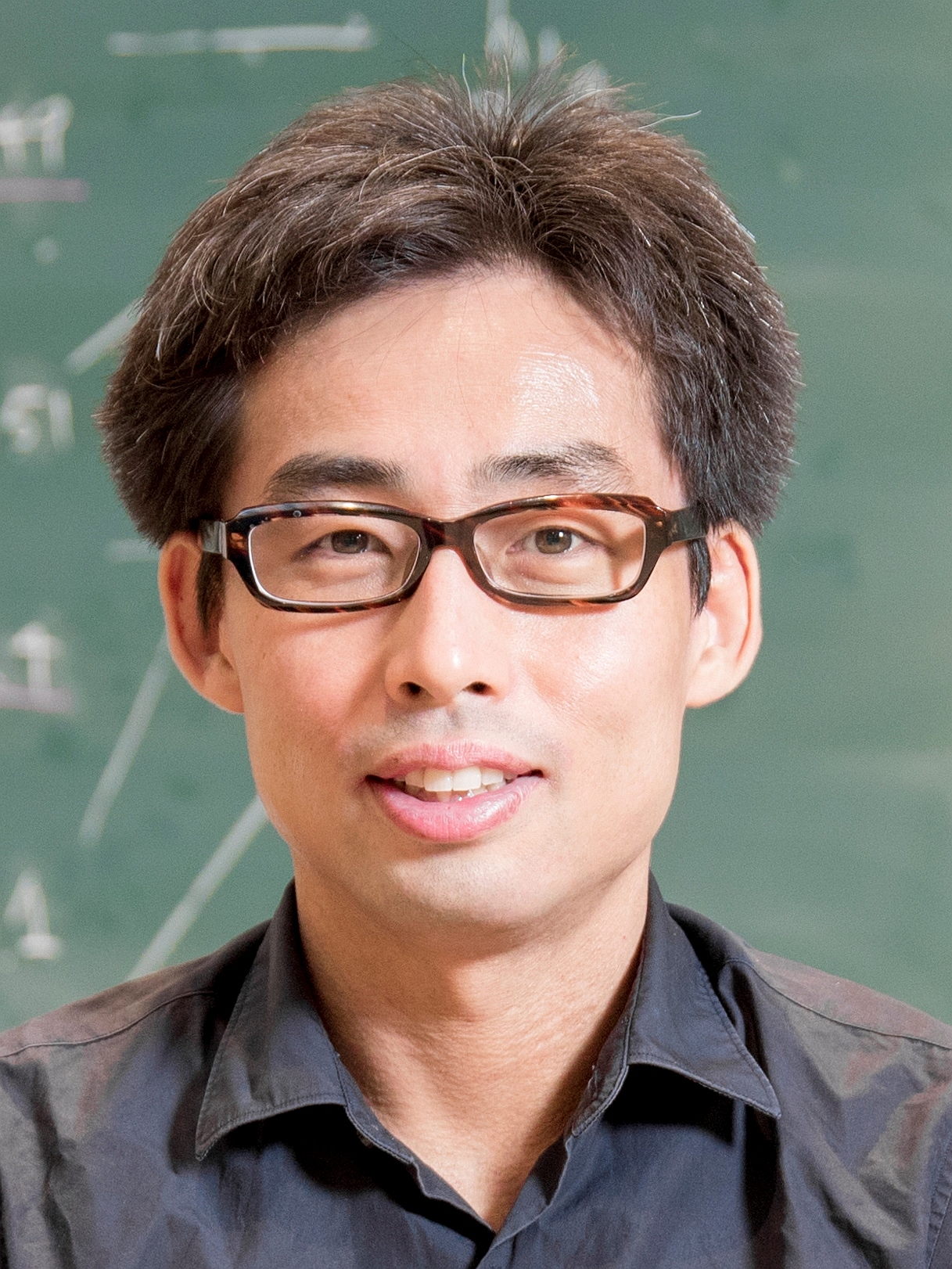

About the speaker:

Kazuyoshi Yoshii received the M.S. and Ph.D. degrees in informatics from Kyoto University, Kyoto, Japan, in 2005 and 2008, respectively. He is an Associate Professor at the Graduate School of Informatics, Kyoto University, and concurrently the Leader of the Sound Scene Understanding Team, Center for Advanced Intelligence Project (AIP), RIKEN, Tokyo, Japan. His research interests include music informatics, single-channel and multichannel audio signal processing, and statistical machine learning.